1. Paper Information

1.1. Authors

Rayson Laroca, Alessandra B. Araujo, Luiz A. Zanlorensi, Eduardo C. de Almeida, David Menotti.

1.2. Abstract

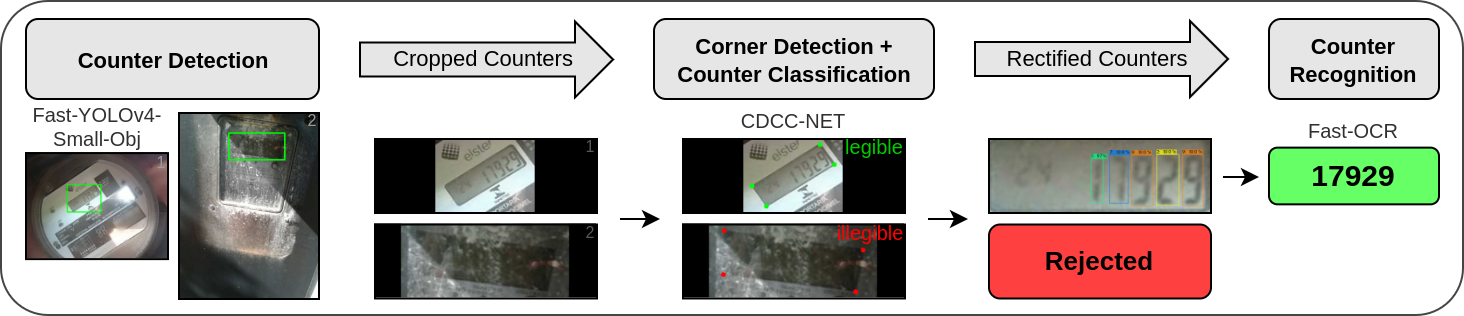

Existing approaches for image-based Automatic Meter Reading (AMR) have been evaluated on images captured in well-controlled scenarios. However, real-world meter reading presents unconstrained scenarios that are way more challenging due to dirt, various lighting conditions, scale variations, in-plane and out-of-plane rotations, among other factors. In this work, we present an end-to-end approach for AMR focusing on unconstrained scenarios. Our main contribution is the insertion of a new stage in the AMR pipeline, called corner detection and counter classification, which enables the counter region to be rectified – as well as the rejection of illegible/faulty meters – prior to the recognition stage. We also introduce a publicly available dataset, called Copel-AMR, that contains 12,500 meter images acquired in the field by the service company’s employees themselves, including 2,500 images of faulty meters or cases where the reading is illegible due to occlusions. Experimental evaluation demonstrates that the proposed system outperforms six baselines in terms of recognition rate while still being quite efficient. Moreover, as very few reading errors are tolerated in real-world applications, we show that our AMR system achieves impressive recognition rates (i.e., ≥ 99%) when rejecting readings made with lower confidence values.

1.3. Citation

If you use the models trained by us or the Copel-AMR dataset in your research, please cite our paper:

- R. Laroca, A. B. Araujo, L. A. Zanlorensi, E. C. de Almeida, D. Menotti, “Towards Image-based Automatic Meter Reading in Unconstrained Scenarios: A Robust and Efficient Approach,” IEEE Access, vol. 9, pp. 67569-67584, 2021. [IEEE Xplore] [PDF] [BibTeX]

2. Downloads

2.1. Proposed AMR System

The proposed approach consists of three main stages: (i) counter detection, (ii) corner detection and counter classification, and (iii) counter recognition. Given an input image, the counter region is located using a modified version of the Fast-YOLOv4 model, called Fast-YOLOv4-SmallObj. Then, in a single forward pass of the proposed Corner Detection and Counter Classification Network (CDCC-NET), the cropped counter is classified as operational/legible or faulty/illegible and the position (x, y) of each of its corners is predicted. Finally, illegible counters are rejected, while legible ones are rectified and fed into our recognition network, called Fast-OCR.
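The control flow described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: `read_meter`, `Reading`, and the five stage callables are hypothetical names, and each stage is passed in as a plain function so that the sketch does not depend on any model or framework. The optional `min_confidence` threshold reflects the abstract's remark about rejecting readings made with lower confidence values; how that confidence is computed is an assumption left to the `recognize` callable.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional, Sequence, Tuple

Box = Tuple[int, int, int, int]   # hypothetical (x, y, width, height) of the counter
Corner = Tuple[float, float]      # (x, y) position of one counter corner


@dataclass
class Reading:
    digits: Optional[str]                  # None when the meter is rejected
    confidence: float
    rejected_reason: Optional[str] = None


def read_meter(
    image: Any,
    detect_counter: Callable[[Any], Optional[Box]],
    crop: Callable[[Any, Box], Any],
    classify_and_find_corners: Callable[[Any], Tuple[bool, Sequence[Corner]]],
    rectify: Callable[[Any, Sequence[Corner]], Any],
    recognize: Callable[[Any], Tuple[str, float]],
    min_confidence: float = 0.0,
) -> Reading:
    # Stage (i): locate the counter region in the input image.
    box = detect_counter(image)
    if box is None:
        return Reading(None, 0.0, "no counter detected")
    counter = crop(image, box)

    # Stage (ii): one call returns both the legibility class and the corners.
    legible, corners = classify_and_find_corners(counter)
    if not legible:
        return Reading(None, 0.0, "illegible or faulty counter")

    # Stage (iii): rectify the legible counter, then recognize its digits.
    rectified = rectify(counter, corners)
    digits, confidence = recognize(rectified)
    if confidence < min_confidence:
        return Reading(None, confidence, "low confidence")
    return Reading(digits, confidence)
```

In practice, `detect_counter` would wrap Fast-YOLOv4-SmallObj, `classify_and_find_corners` would wrap CDCC-NET, and `recognize` would wrap Fast-OCR. Keeping the stages as injected callables makes the rejection logic easy to test with stubs before any model is loaded.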

The YOLO-based models (i.e., Fast-YOLOv4-SmallObj and Fast-OCR) were trained using the Darknet framework, while CDCC-NET was trained using Keras. The architectures and weights can be downloaded here (.zip file) or through the links below:

- Counter Detection (Fast-YOLOv4-SmallObj): network descriptor (architecture), weights, data descriptor, classes

- Corner Detection and Counter Classification (CDCC-NET): Keras model (architecture + weights + optimizer state)

- Counter Recognition (Fast-OCR): network descriptor (architecture), weights, data descriptor, classes

- Miscellaneous: README
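One possible way to load the downloaded files from Python is sketched below. It rests on several assumptions: the file names in `model_paths` are hypothetical placeholders (use the names of the files you actually downloaded), the Darknet models are loaded through OpenCV's DNN module rather than through Darknet itself, and it has not been verified that OpenCV supports every layer in these network descriptors. The README distributed with the models remains the authoritative reference.

```python
from pathlib import Path


def model_paths(model_dir):
    """Map each model component to a file path.

    All file names below are hypothetical placeholders, not the
    names used in the official download.
    """
    root = Path(model_dir)
    return {
        "detector_cfg": root / "counter-detection.cfg",
        "detector_weights": root / "counter-detection.weights",
        "cdcc_model": root / "cdcc-net.h5",
        "recognizer_cfg": root / "counter-recognition.cfg",
        "recognizer_weights": root / "counter-recognition.weights",
    }


def load_models(model_dir):
    """Load the three networks (assumes OpenCV and TensorFlow are installed)."""
    # Imported lazily so that model_paths() works without these packages.
    import cv2
    from tensorflow import keras

    p = model_paths(model_dir)
    # Darknet descriptor (.cfg) plus weights, read by OpenCV's DNN module.
    detector = cv2.dnn.readNetFromDarknet(
        str(p["detector_cfg"]), str(p["detector_weights"])
    )
    recognizer = cv2.dnn.readNetFromDarknet(
        str(p["recognizer_cfg"]), str(p["recognizer_weights"])
    )
    # compile=False skips restoring the optimizer state, which is
    # not needed for inference.
    cdcc_net = keras.models.load_model(str(p["cdcc_model"]), compile=False)
    return detector, cdcc_net, recognizer
```

If the Keras model relies on custom layers or losses, `load_model` may additionally need a `custom_objects` argument; whether that applies here is not stated on this page.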

2.2. Copel-AMR Dataset

The Copel-AMR dataset has six times more images than the largest dataset found in the literature for evaluating end-to-end AMR methods, and it is more varied in several respects. It also comes with a well-defined evaluation protocol to support the development of new AMR approaches and the fair comparison of published works.

Full details on the dataset, including download instructions, are available here.

2.3. Additional Annotations

Because the UFPR-AMR dataset (available here) has no annotations for the corners of the counters, we manually labeled the corner positions in its 2,000 images so that images from both datasets (i.e., Copel-AMR and UFPR-AMR) can be used to train and evaluate the CDCC-NET model. These annotations are provided along with the Copel-AMR dataset.

3. Related Work

You may also be interested in our previous research, where we introduced the UFPR-AMR dataset:

- R. Laroca, V. Barroso, M. A. Diniz, G. R. Gonçalves, W. R. Schwartz, D. Menotti, “Convolutional Neural Networks for Automatic Meter Reading,” Journal of Electronic Imaging, vol. 28, no. 1, p. 013023, 2019. [Webpage] [SPIE Digital Library] [PDF] [BibTeX] [Copyright Notice]

A list of all our published papers on AMR is available here.

4. Contact

Please contact the first author (Rayson Laroca) with questions or comments.